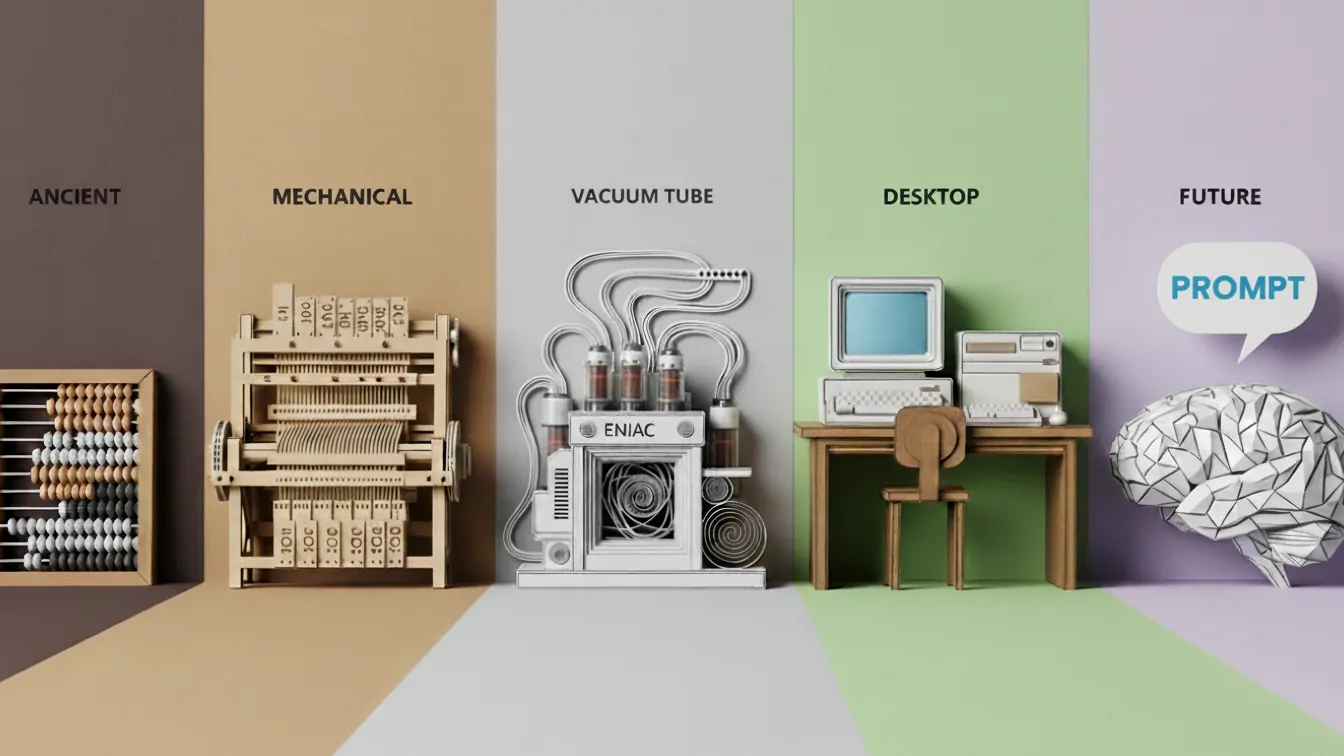

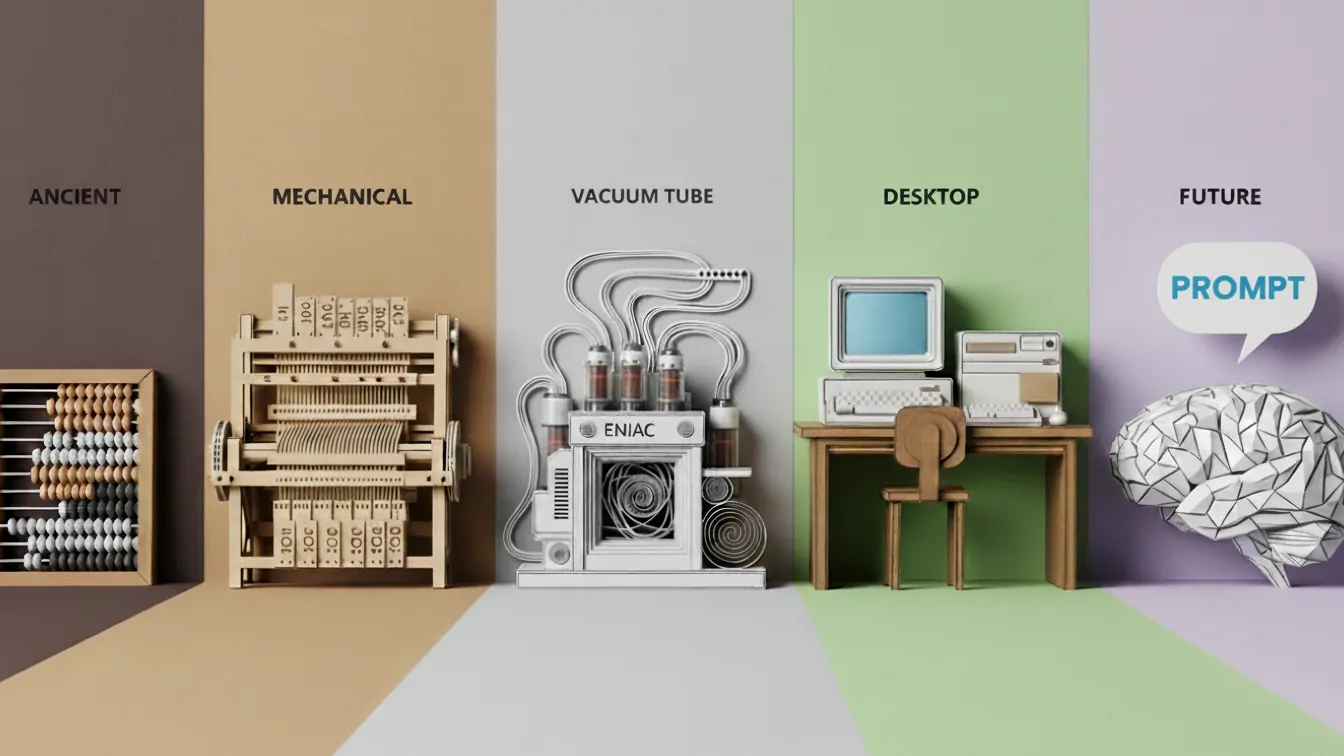

For the last fifty years, "learning to code" meant learning to speak the language of machines. We spent years mastering the arcane grammar of braces, semicolons, and memory pointers. We learned that computers are literal genies that do exactly what you say, often with disastrous results if you miss a single character.

“Prompt Engineering isn't the end of programming. It is simply the highest level of abstraction we have ever reached. It is coding in prose.”

But with the rise of Large Language Models (LLMs), the barrier between human intent and machine execution has started to dissolve. For many tasks, we no longer need to translate our thoughts into Python or C++ to make a computer do work. We just ask. This has led to a common misconception that "Prompt Engineering" is a soft skill, a kind of "AI whispering" that relies more on vibes than logic.

In traditional programming, we write source code, pass it through a compiler or interpreter, and get a program that runs the same way every time. It is deterministic. Input A always leads to Output B. In the new paradigm, the LLM is the compiler. You feed it English source code, and it generates behavior. The difference is that this compiler is probabilistic: the same prompt can produce different output from one run to the next.
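To make the contrast concrete, here is a toy sketch in Python. The token scores are invented for illustration, and `compile_deterministic` and `sample_next_token` are hypothetical names, not part of any real model API. Real models sample over a much larger vocabulary, but the idea of drawing from a weighted distribution is the same.

```python
import math
import random


def compile_deterministic(source: str) -> str:
    """Stand-in for a traditional toolchain: same input, same output."""
    return source.upper()


def sample_next_token(logits: dict, temperature: float, rng: random.Random) -> str:
    """Pick one token from softmax(logits / temperature).

    In this sketch, a temperature of 0 means greedy decoding (always the top token).
    """
    if temperature == 0:
        return max(logits, key=logits.get)
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


# Made-up scores for the token that follows "The product is".
logits = {"secure": 2.0, "fast": 1.5, "cheap": 0.5}
rng = random.Random()

print(compile_deterministic("input a"))  # identical on every run
print([sample_next_token(logits, 1.0, rng) for _ in range(5)])  # may vary between runs
print([sample_next_token(logits, 0.0, rng) for _ in range(5)])  # always the top-scoring token
```

Lowering the temperature narrows the spread of outputs, which is one reason a tightly specified prompt plus a low temperature feels closer to conventional, repeatable code.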

Think of the chat context window as your application's memory. When you start a new chat, you are initializing an empty environment. The "System Prompt" is analogous to setting your Global Environment Variables.

Bad Prompt (Undefined Variables): "Write a marketing email." This is like calling a function with null arguments. The model defaults to generic, vanilla behavior.

Developer Prompt (Strict Typing): "You are a Senior Copywriter [Role]. Your audience is CTOs of Fortune 500 companies [Target]. The tone is professional but urgent [Style]. The product is a cybersecurity SaaS [Context]."

We are instantiating the object with properties. We are defining the scope. By explicitly setting these variables, we narrow the search space of the model. This works much the way type constraints in TypeScript prevent a function from accepting the wrong kind of data.
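One way to picture the "Developer Prompt" is as a small typed object that refuses to build a prompt when a field is missing. The sketch below is only an illustration: `PromptConfig` and its field names are hypothetical, chosen to mirror the [Role], [Target], [Style], and [Context] labels above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class PromptConfig:
    role: str
    audience: str
    tone: str
    context: str

    def __post_init__(self) -> None:
        # Fail fast on "undefined variables" instead of letting the model guess.
        for f in fields(self):
            if not getattr(self, f.name).strip():
                raise ValueError(f"{f.name} must not be empty")

    def to_system_prompt(self) -> str:
        return (
            f"You are a {self.role}. "
            f"Your audience is {self.audience}. "
            f"The tone is {self.tone}. "
            f"The product is {self.context}."
        )


config = PromptConfig(
    role="Senior Copywriter",
    audience="CTOs of Fortune 500 companies",
    tone="professional but urgent",
    context="a cybersecurity SaaS",
)
print(config.to_system_prompt())
```

Leaving any field blank raises a `ValueError` before the prompt is ever sent, which is the prose equivalent of a failed type check.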

One of the most powerful techniques in prompt engineering is "Few-Shot Prompting." This is where you give the AI examples of what you want before asking it to perform the task. To a developer, this should look familiar. It is Test-Driven Development (TDD) combined with Function Definition.

You aren't just asking the model to "guess." You are defining the transformation logic by providing valid input/output pairs. In effect, you are passing the unit tests into the function to show it how to behave.
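A minimal sketch of how such a prompt might be assembled. The date-conversion task, the `Input:`/`Output:` labels, and the helper name `build_few_shot_prompt` are all assumptions made for illustration. No model is called here.

```python
def build_few_shot_prompt(instruction: str, examples: list, query: str) -> str:
    """Lay out worked input/output pairs ahead of the real input."""
    lines = [instruction, ""]
    for source, target in examples:
        lines += [f"Input: {source}", f"Output: {target}", ""]
    # End on an open "Output:" so the model's continuation is the answer.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


# Hypothetical task: turn free-form dates into ISO 8601.
examples = [
    ("March 5, 2021", "2021-03-05"),
    ("12 Jan 2019", "2019-01-12"),
]
prompt = build_few_shot_prompt(
    "Convert each date to ISO 8601 format.", examples, "July 4, 2023"
)
print(prompt)
```

The examples play the role of test cases: each pair pins down one behavior, and the final open `Output:` is the call site.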

When an LLM fails a complex math or logic problem, it is often because it tried to predict the answer token immediately. It essentially tried to return a value without running the function body.

Chain of Thought prompting means asking the model to "think step by step." Instead of jumping straight to an answer, the model is pushed to work through an algorithm.

It forces the model to allocate computation to intermediate steps and creates a "stack trace" of logic that it can reference before generating the final output. As developers, we know that breaking a monolithic function into smaller, readable sub-routines reduces bugs. Telling an AI to "think step by step" is the prose equivalent of refactoring a complex function into manageable steps.
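As a rough sketch, the pattern can be wrapped in two small helpers: one that appends the step-by-step instruction, and one that pulls the final value out of the reply. The `Answer:` line convention and both function names are assumptions for this example, and `sample_response` is a hand-written stand-in for a model reply rather than real output.

```python
import re
from typing import Optional


def with_chain_of_thought(question: str) -> str:
    """Ask for intermediate steps first and a clearly marked final line."""
    return (
        f"{question}\n\n"
        "Think step by step. Show each intermediate calculation, "
        "then give the result on a final line formatted as 'Answer: <value>'."
    )


def extract_answer(response: str) -> Optional[str]:
    """Return the value from the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


# Hand-written stand-in for a model reply; no API is called here.
sample_response = """Step 1: 3 boxes x 12 pens = 36 pens.
Step 2: 36 pens - 5 given away = 31 pens.
Answer: 31"""

print(with_chain_of_thought("A shop has 3 boxes of 12 pens and gives away 5. How many remain?"))
print(extract_answer(sample_response))
```

Keeping the intermediate steps in the reply also gives you something to inspect when the final answer is wrong, much like reading a trace.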

When code crashes, we get a stack trace. When an LLM "crashes," it hallucinates. It confidently states something that is not true. Debugging a prompt requires the same analytical skills as debugging code.

The "fix" often looks like a patch note. You move from "Summarize this text" to "Summarize this text using only the provided source material." This adds a constraint to prevent errors.
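If prompts are kept as versioned text, the "patch note" can be shown quite literally with Python's standard `difflib` module. This is only a sketch: the version labels `prompt_v1` and `prompt_v2` are hypothetical, and the two prompts are the ones quoted above.

```python
import difflib

prompt_v1 = ["Summarize this text"]
prompt_v2 = ["Summarize this text using only the provided source material"]

# unified_diff yields diff lines; lineterm="" avoids doubled newlines when printing.
patch = list(
    difflib.unified_diff(
        prompt_v1,
        prompt_v2,
        fromfile="prompt_v1",
        tofile="prompt_v2",
        lineterm="",
    )
)
print("\n".join(patch))
```

Storing prompts this way means a fix for a hallucination can be reviewed, reverted, and tested like any other code change.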

The syntax has changed from a for loop to "Repeat this for every item." But the underlying engineering principles are more critical than ever. These include modularity, clarity, constraints, and edge-case handling.

We aren't just writing sentences. We are architecting intelligence. So, the next time you write a prompt, don't just chat. Code.

Web developer by trade, writer by passion, and data analyst by curiosity. I spend my downtime benchmarking the latest AI models, analyzing their evolution and dissecting how they are reshaping our future.